A demonstration is available at: http://www.se.cuhk.edu.hk/TTVS

LinLin: A Cantonese Text-to-Visual Speech Synthesizer

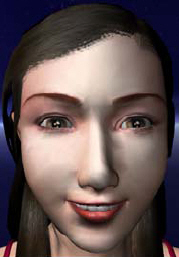

We have participated in the development of a virtual talking agent, LinLin. The agent accepts Chinese text input and generates, in real time, a three-dimensional (3D) talking face that is lip-synchronized to synthesized Cantonese speech. LinLin's 3D face model consists of a large set of position coordinates, on which we have defined 16 static visemes. The face is rendered with a blending technique that computes linear combinations of the static visemes to produce smooth transitions between them.
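The blending idea can be illustrated with a minimal sketch. It assumes each static viseme is stored as a list of (x, y, z) coordinates with the same point ordering. The names `blend_visemes` and `transition` are hypothetical and are not taken from the LinLin implementation.

```python
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]
Mesh = List[Vertex]  # assumed layout: one (x, y, z) tuple per face-model point


def blend_visemes(visemes: Sequence[Mesh], weights: Sequence[float]) -> Mesh:
    """Return the weighted linear combination of meshes that share one point ordering."""
    if not visemes or len(visemes) != len(weights):
        raise ValueError("need one weight per viseme")
    n = len(visemes[0])
    if any(len(m) != n for m in visemes):
        raise ValueError("all visemes must have the same number of points")
    blended: Mesh = []
    for i in range(n):
        # Each output coordinate is sum_k w_k * coordinate of viseme k.
        x = sum(w * m[i][0] for m, w in zip(visemes, weights))
        y = sum(w * m[i][1] for m, w in zip(visemes, weights))
        z = sum(w * m[i][2] for m, w in zip(visemes, weights))
        blended.append((x, y, z))
    return blended


def transition(src: Mesh, dst: Mesh, t: float) -> Mesh:
    """Interpolate from src (t = 0) to dst (t = 1); t is clamped to [0, 1]."""
    t = min(1.0, max(0.0, t))
    return blend_visemes([src, dst], [1.0 - t, t])


# Toy two-point "mouth" (illustrative data only, not real viseme geometry).
closed_mouth: Mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
open_mouth: Mesh = [(0.0, -2.0, 0.0), (1.0, 2.0, 0.0)]
halfway = transition(closed_mouth, open_mouth, 0.5)
```

Stepping `t` from 0 to 1 over successive frames moves every point along a straight line between the two visemes, which is what makes the transition smooth.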

Lip-synchronization follows the initial and final durations of each syllable in the synthesized speech. LinLin can also display blinking, head movements, hand gestures, and facial emotions; “happy” and “worry” are static models with a smile and medially upturned eyebrows, respectively.
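One way to picture duration-driven lip-synchronization is a keyframe timeline. The sketch below assumes the speech synthesizer reports a duration for the initial and the final of every syllable, and that each one maps to a viseme index. The `Syllable` fields, `build_keyframes`, and `weights_at` are hypothetical names chosen for illustration, not the method used in LinLin.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Syllable:
    initial_viseme: int   # assumed viseme index for the syllable initial
    initial_dur: float    # seconds; 0.0 when the syllable has no initial
    final_viseme: int     # assumed viseme index for the syllable final
    final_dur: float      # seconds


def build_keyframes(syllables: List[Syllable]) -> List[Tuple[float, int]]:
    """Place one viseme keyframe at the start time of each initial and final."""
    keyframes: List[Tuple[float, int]] = []
    t = 0.0
    for s in syllables:
        if s.initial_dur > 0.0:
            keyframes.append((t, s.initial_viseme))
            t += s.initial_dur
        keyframes.append((t, s.final_viseme))
        t += s.final_dur
    return keyframes


def weights_at(keyframes: List[Tuple[float, int]], time: float) -> Dict[int, float]:
    """Return linear blend weights for the visemes on either side of `time`."""
    if not keyframes:
        return {}
    if time <= keyframes[0][0]:
        return {keyframes[0][1]: 1.0}
    for (t0, v0), (t1, v1) in zip(keyframes, keyframes[1:]):
        if t0 <= time < t1:
            if v0 == v1:
                return {v0: 1.0}
            a = (time - t0) / (t1 - t0)
            return {v0: 1.0 - a, v1: a}
    # Past the last keyframe: hold the final viseme.
    return {keyframes[-1][1]: 1.0}


# Illustrative durations only; the second syllable has no initial.
timeline = build_keyframes([
    Syllable(initial_viseme=3, initial_dur=0.25, final_viseme=7, final_dur=0.5),
    Syllable(initial_viseme=0, initial_dur=0.0, final_viseme=5, final_dur=0.25),
])
```

The weights returned for a given playback time could then be used as the coefficients of a linear combination of static visemes, so that mouth shapes change in step with the audio.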

LinLin with different facial emotions ("happy" left, "worry" right)

LinLin was selected to represent The Chinese University of Hong Kong in the Challenge Cup Competition 2003, a biennial competition in which over two hundred universities across China compete with their science and technology projects. LinLin was awarded a Level 2 Prize in this competition.

Related Publication:

- Wang, J. Q., Wong, K. H., Heng, P. A., Meng, H., Wong, T. T., "A Real-Time Cantonese Text-to-Audiovisual Speech Synthesizer", International Conference on Acoustics, Speech and Signal Processing (ICASSP), Fairmont Queen Elizabeth Hotel, Montreal, Quebec, Canada, 17-21 May 2004.
