Research

Our research spans signal processing and optimization for machine learning, with a focus on methods that are both theoretically justified and practical.

Low Pass Graph Signal Processing and Graph Learning

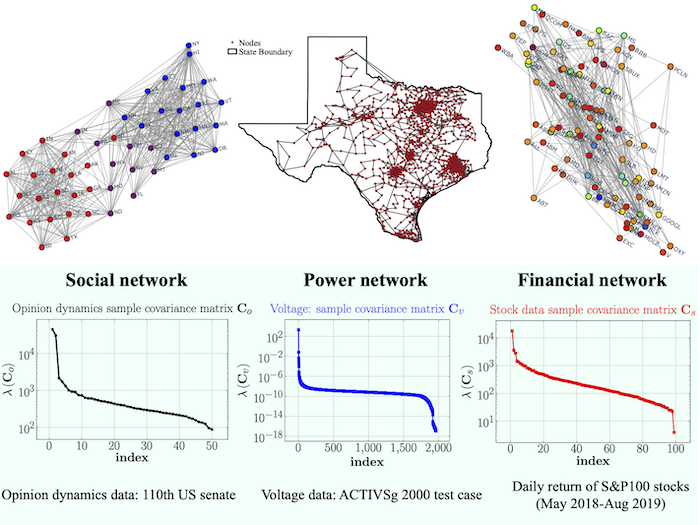

A common task in data science is to learn a graph representation from real-world data for use in downstream decision making. Our research builds graph learning tools on data models inspired by graph signal processing (GSP) with “low pass” filtering. In other words, the postulated generative model consists of a graph filter that retains only the low-frequency content, which corresponds to the common notion of “smooth graph signals”. Such low pass GSP models are prevalent in the dynamics of social networks, financial networks, etc.
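As a minimal sketch of this idea, the snippet below passes a white excitation through a low pass graph filter on a small path graph and compares the smoothness of the input and the output. The filter form `H = (I + alpha * L)^{-1}`, the path graph, and the helper names `path_laplacian`, `low_pass_filter`, and `smoothness` are assumptions chosen for illustration, not the specific models used in the publications.

```python
import numpy as np

def path_laplacian(n):
    """Combinatorial Laplacian L = D - A of a path graph on n nodes."""
    A = np.zeros((n, n))
    idx = np.arange(n - 1)
    A[idx, idx + 1] = 1.0
    A[idx + 1, idx] = 1.0
    return np.diag(A.sum(axis=1)) - A

def low_pass_filter(L, alpha):
    """Illustrative low pass filter H = (I + alpha * L)^{-1}.
    Its frequency response 1 / (1 + alpha * lam) decays with the graph frequency lam."""
    return np.linalg.inv(np.eye(L.shape[0]) + alpha * L)

def smoothness(L, x):
    """Normalized Laplacian quadratic form x^T L x / x^T x (smaller = smoother)."""
    return float(x @ L @ x) / float(x @ x)

L = path_laplacian(8)
rng = np.random.default_rng(0)
x = rng.standard_normal(8)              # white excitation
y = low_pass_filter(L, alpha=2.0) @ x   # filtered (low pass) graph signal

print(f"input smoothness:  {smoothness(L, x):.3f}")
print(f"output smoothness: {smoothness(L, y):.3f}")
```

Because the assumed frequency response decreases with the graph frequency, the output quadratic form is never larger than the input one; a larger `alpha` attenuates high frequencies more strongly.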

Low pass graph signals have two important properties: (i) smoothness and (ii) low rankness. Our work shows that the spectral properties of such signals can be leveraged to learn graph topology from nodal observations. Recently, we have applied these techniques to (a) detection of low pass graph signals, (b) multiplex graph learning, and (c) graph topology learning from partial observations, among others.
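To illustrate how spectral properties of low pass signals can reveal graph structure, here is a hedged sketch that recovers two communities from nodal observations alone. It assumes a toy graph of two cliques joined by one edge, white excitation, and the filter `H = (I + L)^{-1}`; the function names are hypothetical, and the sign-based split is a simplified stand-in for the methods in the publications.

```python
import numpy as np

def two_clique_laplacian(m):
    """Laplacian of two m-node cliques joined by one bridge edge."""
    n = 2 * m
    A = np.zeros((n, n))
    A[:m, :m] = 1.0
    A[m:, m:] = 1.0
    np.fill_diagonal(A, 0.0)
    A[m - 1, m] = A[m, m - 1] = 1.0   # bridge between the cliques
    return np.diag(A.sum(axis=1)) - A

def sample_low_pass_signals(L, num_samples, rng):
    """Draw signals y = H x with white x and the assumed filter H = (I + L)^{-1}."""
    n = L.shape[0]
    H = np.linalg.inv(np.eye(n) + L)
    X = rng.standard_normal((n, num_samples))
    return H @ X

def communities_from_signals(Y):
    """Split nodes into two groups using the sign of the second principal
    eigenvector of the sample covariance; the graph itself is never used."""
    C = (Y @ Y.T) / Y.shape[1]
    _, eigvecs = np.linalg.eigh(C)    # eigenvalues in ascending order
    second = eigvecs[:, -2]
    return (second > 0).astype(int)

rng = np.random.default_rng(1)
L = two_clique_laplacian(5)
Y = sample_low_pass_signals(L, num_samples=2000, rng=rng)
labels = communities_from_signals(Y)
print(labels)
```

Under these assumptions the covariance of the signals shares its eigenvectors with the Laplacian, with the low graph frequencies carrying the most energy, so the top principal components approximate the smoothest Laplacian eigenvectors. Which group receives label 0 or 1 depends on the arbitrary sign of the eigenvector.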

Selected Publications:

R. Ramakrishna, H.-T. Wai, A. Scaglione, “A User Guide to Low-Pass Graph Signal Processing and its Applications”, IEEE Signal Processing Magazine, 2020.

Y. He, H.-T. Wai, “Online Inference for Mixture Model of Streaming Graph Signals with Sparse Excitation”, IEEE Transactions on Signal Processing, 2023.

Y. He, H.-T. Wai, “Detecting Central Nodes from Low-rank Excited Graph Signals via Structured Factor Analysis”, IEEE Transactions on Signal Processing, 2022.

H.-T. Wai, Y. Eldar, A. Ozdaglar, A. Scaglione, “Community Inference from Partially Observed Graph Signals: Algorithms and Analysis”, IEEE Transactions on Signal Processing, 2022.

H.-T. Wai, S. Segarra, A. Ozdaglar, A. Scaglione, and A. Jadbabaie, “Blind community detection from low-rank excitations of a graph filter”, IEEE Transactions on Signal Processing, 2020.

C. Zhang and H.-T. Wai, “Learning Multiplex Graph with Inter-layer Coupling”, in IEEE ICASSP 2024.

Check out the publications for more details, as well as the Slides for a plenary talk (YouTube) I gave at the GSP Workshop 2023.

Decision-dependent Stochastic Approximation Algorithms

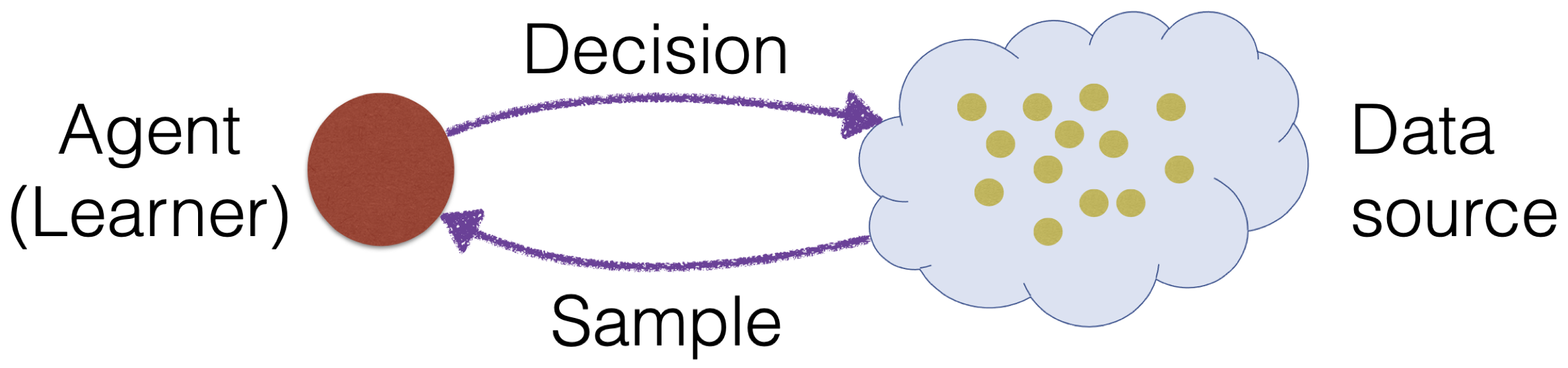

Stochastic approximation (SA) is the workhorse behind many online algorithms that rely on streaming data, with applications in reinforcement learning and statistical learning. Our work considers a setting in which the streaming data is not i.i.d., but is correlated and decision dependent.

Our research hinges on the analysis of SA schemes whose drift term depends on a decision-dependent Markov chain and whose mean field is not necessarily a gradient map, which leads to an asymptotic bias in the one-step updates. The framework extends to other problems: (a) policy optimization, where the decision, i.e., the policy, affects future state generation; (b) performative prediction, where the decision vector influences future predictions and samples; (c) bi-level optimization, where a leader optimizes a decision using samples or gradient information supplied by a follower solving a lower-level optimization problem.
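A toy sketch of such a recursion is given below, under assumptions made purely for illustration: a scalar decision `theta`, the loss `0.5 * (theta - z)^2`, and samples `z` produced by an AR(1) Markov chain whose stationary mean `mu0 + eps * theta` shifts with the current decision. The function name `decision_dependent_sa` and all parameter values are hypothetical. Each update uses the gradient at the current sample and ignores how `theta` shifts the data distribution.

```python
import numpy as np

def decision_dependent_sa(num_iters, mu0=1.0, eps=0.5, rho=0.8, sigma=0.1, seed=0):
    """SA recursion theta <- theta - gamma_k * (theta - z) driven by an AR(1)
    chain whose stationary mean mu0 + eps * theta depends on the decision."""
    rng = np.random.default_rng(seed)
    theta, z = 0.0, 0.0
    for k in range(num_iters):
        # Markovian, decision-dependent sample: the chain drifts toward mu0 + eps * theta.
        z = rho * z + (1.0 - rho) * (mu0 + eps * theta) + sigma * rng.standard_normal()
        gamma = 2.0 / (k + 20.0)        # diminishing step size
        theta -= gamma * (theta - z)    # stochastic gradient of 0.5 * (theta - z)^2
    return theta

theta_hat = decision_dependent_sa(20000)
theta_stable = 1.0 / (1.0 - 0.5)        # fixed point of theta = mu0 + eps * theta
print(theta_hat, theta_stable)
```

In this toy model the iterate settles near the fixed point `mu0 / (1 - eps)`, where the decision is optimal for the data distribution it induces. The samples are correlated over time and never drawn from a fixed distribution, which is the feature the sketch is meant to highlight.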

Selected Publications:

A. Dieuleveut, G. Fort, E. Moulines, H.-T. Wai, “Stochastic Approximation Beyond Gradient for Signal Processing and Machine Learning”, IEEE Transactions on Signal Processing (Overview Paper), 2023.

M. Hong, H.-T. Wai, Z. Yang, Z. Wang, “A two-timescale framework for bilevel optimization: Complexity analysis and application to actor-critic”, SIAM Journal on Optimization, 2023.

X. Wang, C.-Y. Yau, H.-T. Wai, “Network Effects on Performative Prediction Games”, in ICML 2023.

Q. Li, C.-Y. Yau, H.-T. Wai, “Multi-agent Performative Prediction with Greedy Deployment and Consensus Seeking Agents”, in NeurIPS 2022.

Q. Li, H.-T. Wai, “State Dependent Performative Prediction with Stochastic Approximation”, in AISTATS 2022.

A. Durmus, E. Moulines, A. Naumov, S. Samsonov, H.-T. Wai, “On the Stability of Random Matrix Product with Markovian Noise: Application to Linear Stochastic Approximation and TD Learning”, in COLT 2021.

M. Kaledin, E. Moulines, A. Naumov, V. Tadic and H.-T. Wai, “Finite Time Analysis of Linear Two-timescale Stochastic Approximation with Markovian Noise”, in COLT 2020.

B. Karimi, B. Miasojedow, E. Moulines and H.-T. Wai, “Non-asymptotic Analysis of Biased Stochastic Approximation Scheme”, in COLT 2019.

Check out the publications for more details, as well as some Slides from a talk I gave at URJC, Spain.

Optimization for Large-scale Machine Learning and Signal Processing

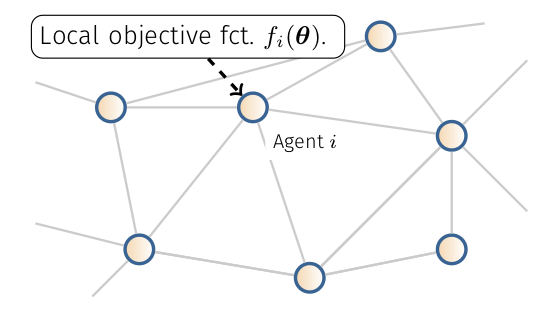

We study algorithms for large-scale machine learning and information processing to handle the challenges of “big data”. We focus on two interconnected aspects: first, algorithms that run on systems of interconnected agents, so that the computational burden is distributed evenly across the network; second, algorithms that operate on stochastic (e.g., streaming or dynamical) data. In both cases, we aim to provide rigorous performance analysis for convex and non-convex optimization models. Recently, we have considered communication-efficient designs that work “robustly” on physical networks with non-ideal communication architectures.
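As a rough illustration of computation spread over interconnected agents, the sketch below runs gradient tracking, one standard decentralized scheme, on a ring of five agents. The quadratic local objectives `f_i(x) = 0.5 * (x - b_i)^2`, the ring topology with uniform weights, and the function names are assumptions for this example; it is not claimed to be the algorithm of any listed paper.

```python
import numpy as np

def ring_mixing_matrix(n):
    """Doubly stochastic mixing matrix for a ring (n >= 3): each agent
    averages itself and its two neighbors with weight 1/3."""
    W = np.zeros((n, n))
    for i in range(n):
        W[i, i] = W[i, (i - 1) % n] = W[i, (i + 1) % n] = 1.0 / 3.0
    return W

def gradient_tracking(b, W, step=0.1, num_iters=300):
    """Decentralized gradient tracking for f_i(x) = 0.5 * (x - b_i)^2.
    Agent i only uses its own b_i and the values held by its ring neighbors."""
    grad = lambda x: x - b       # stacked local gradients, one entry per agent
    x = np.zeros_like(b)
    y = grad(x)                  # tracker of the network-average gradient
    for _ in range(num_iters):
        x_next = W @ x - step * y
        y = W @ y + grad(x_next) - grad(x)
        x = x_next
    return x

b = np.array([1.0, 4.0, -2.0, 3.0, 9.0])
x_final = gradient_tracking(b, ring_mixing_matrix(5))
print(x_final, b.mean())
```

Each agent ends up close to the minimizer of the sum of all local objectives, here the average of the `b_i`, even though no agent ever sees another agent's data directly.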

Selected Publications:

T.-H. Chang, M. Hong, H.-T. Wai, X. Zhang, S. Lu, “Distributed Learning in the Non-Convex World: From Batch to Streaming Data, and Beyond”, IEEE Signal Processing Magazine, May, 2020.

C.-Y. Yau, H.-T. Wai, “DoCoM: Compressed Decentralized Optimization with Near-Optimal Sample Complexity”, Transactions on Machine Learning Research, 2023.

B. Turan, C. A. Uribe, H.-T. Wai, M. Alizadeh, “Resilient Primal-Dual Optimization Algorithms for Distributed Resource Allocation”, IEEE Transactions on Control of Networked Systems, 2020.

H.-T. Wai, W. Shi, C. A. Uribe, A. Nedic, A. Scaglione, “Accelerating incremental gradient optimization with curvature information”, Computational Optimization and Applications, 2020.

X. Wang, Y. Jiao, H.-T. Wai, Y. Gu, “Incremental Aggregated Riemannian Gradient Method for Distributed PCA”, in AISTATS 2023.

B. Karimi, H.-T. Wai, E. Moulines and M. Lavielle, “On the Global Convergence of (Fast) Incremental Expectation Maximization Methods”, in NeurIPS 2019.

C.-Y. Yau, H.-T. Wai, “Fully Stochastic Distributed Convex Optimization on Time-Varying Graph with Compression”, in CDC 2023.

Check out the publications for more details.